News

-

英联锂能与德国Rhenus Automotive集…

2024.01.171月9日上午,英联锂能总经理蒋英与Rhenus Automotive(瑞诺司汽车)集团全球CEO、…

-

悦动十月金秋 趣享运动风采

2023.10.31金秋十月,秋高气爽。10月28日,电联集团举办了第一届职工趣味运动会,本次活动旨…

-

深化合作 共赢未来

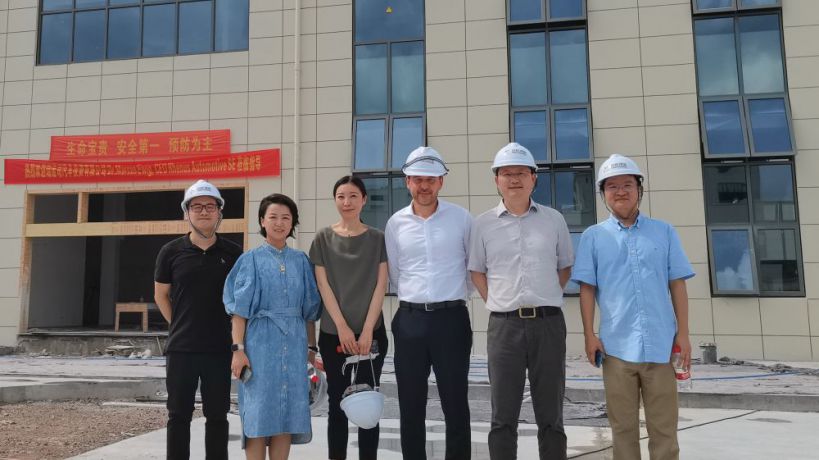

2023.07.267月18日,德国Rhenus Automotive、众和集团一行应邀参观考察浙江英联锂能新能源科…

-

强化东西合作 促进共同发展

2023.04.25日前,青海省茫崖市委政府主要领导组团来杭进行招商考察,期间市委书记柳雪莲…

-

英联锂能与德国Rhenus Automotive集团签订合作项目协议

1月9日上午,英联锂能总经理蒋英与Rhenus Automotive(瑞诺司汽车)集团全球CEO、瑞诺司汽车投资(上海…

-

悦动十月金秋 趣享运动风采

金秋十月,秋高气爽。10月28日,电联集团举办了第一届职工趣味运动会,本次活动旨在为员工们提供一个展…

-

深化合作 共赢未来

7月18日,德国Rhenus Automotive、众和集团一行应邀参观考察浙江英联锂能新能源科技有限公司。本次考察…

-

强化东西合作 促进共同发展

日前,青海省茫崖市委政府主要领导组团来杭进行招商考察,期间市委书记柳雪莲率党政代表团莅临我司…

-

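Learning Together, Advancing Together: First Internal Trainer Training Course Begins

To strengthen team building and raise the standard of internal training, the group formed its first team of internal trainers at the start of the year. To put the internal trainer team to full use, …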

-

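Uniting in Purpose, Overcoming Difficulties, and Pressing Ahead with High-Quality Development

From July 23 to 25, 2022, Dianlian Group (电联集团) held its 2022 mid-year work conference in Meicheng (梅城). The business divisions, second-tier groups, and directly managed units, focusing on the second-round…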